Though disasters come in many forms, two things are always a top concern when they hit: how quickly you want to recover and how little data you want to lose.

Recovery time and data loss prevention objectives are also known as Recovery Time Objectives (RTOs) and Recovery Point Objectives (RPOs), respectively. While both are crucial to disaster recovery (DR) and business continuity, they play different roles in the DR process.

In this article, we’ll compare RTOs to RPOs and explain their key points and differences. We’ll also explain how you can meet them and incorporate them into your recovery and continuity planning. Or, if you’d like to cut to the chase, please check out Resilio’s solution for Disaster Recovery.

What is an RTO?

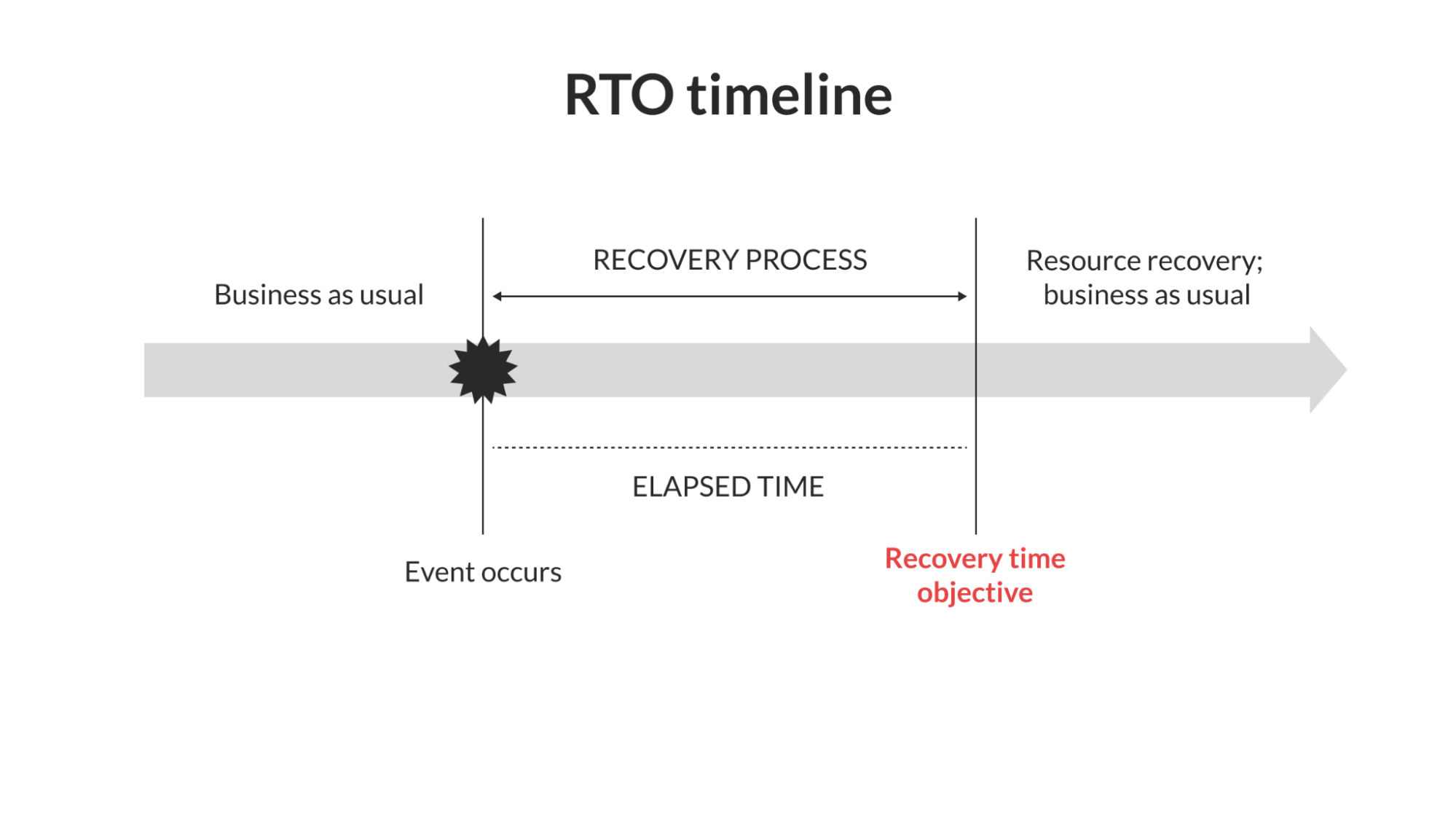

A Recovery Time Objective (RTO) is the maximum length of time it should take to recover after an event such as an outage or data loss.

As you might imagine, having a tight RTO is a key goal for any IT infrastructure — especially those that support applications with high uptime requirements.

Recovery time as a benchmark

When a system goes down in an unplanned outage, the organization’s RTO serves as a benchmark for how quickly it should recover and resume normal operations.

RTOs vary depending on two major factors:

- Cost of downtime

- System or application priority (or tier)

These two factors are highly connected. While most organizations aim for RTOs set to within an hour, systems with the highest priority (Tier 1) might have RTOs as low as 5-10 minutes.

Conversely, RTOs for less critical (Tiers 2 and 3) systems might be as long as four hours or even up to an entire day. Again, it all comes down to the organization’s cost of downtime, which can include things like loss of revenue, lost data, or risk to life in the case of medical technology.

Note that RTOs aren’t directly concerned with data loss — that’s more the job of RPOs, which is why RTOs and RPOs are both crucial to DR plans. We’ll cover RPOs in depth later on, but know that RTOs can have an indirect impact on RPOs if the time to recovery impacts the data loss in some way.

Calculating RTOs

As mentioned previously, calculating RTOs depends on the cost of downtime and system priority.

Most organizations use a system’s priority or tier as the starting point, as this usually informs the cost and impact of downtime. Typical tiers and their respective RTOs include:

- Tier 1 RTO: 10-15 minutes. Tier 1 includes critical infrastructure, such as that used for retail and other customer-facing and transactional applications. While some Tier 1 RTOs can be up to an hour, they should always be as short as possible.

- Tier 2 RTO: 1-3 hours. Tier 2 systems are very important to a business but not quite as critical as Tier 1 systems. In other words, while downtime won’t immediately impact revenue, it may impact productivity.

- Tier 3 RTO: 4-24 hours (or longer). Tier 3 systems are those that the organization can afford to bring back online over several hours or days. While that doesn’t mean they aren’t important, Tier 3 systems may simply be less important than others in some situations.

Of course, these are only guidelines. One organization’s Tier 1 RTO might be considerably longer than another’s.

For example, an insurance company’s customer portal (a Tier 1 system) might have an RTO exceeding one hour, as an hour or two of downtime likely won’t affect revenue in a significant way. However, an hour would be much too long for a major online retailer, where even five minutes of downtime can mean thousands of dollars in lost revenue.
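One way to make the link between downtime cost and RTO concrete is to work backward from the loss you can tolerate per incident. The sketch below is illustrative only: the function name and all dollar figures are hypothetical, and it assumes downtime cost accrues at a constant rate per minute, which real outages rarely do.

```python
def max_rto_minutes(tolerable_loss: float, cost_per_minute: float) -> float:
    """Longest outage, in minutes, whose cost stays within tolerable_loss.

    Assumes a constant cost of downtime per minute (a simplification).
    """
    if cost_per_minute <= 0:
        raise ValueError("cost_per_minute must be positive")
    return tolerable_loss / cost_per_minute


# Hypothetical figures, for illustration only.
retailer_rto = max_rto_minutes(tolerable_loss=10_000, cost_per_minute=1_000)
insurer_rto = max_rto_minutes(tolerable_loss=6_000, cost_per_minute=50)

print(retailer_rto)  # 10.0
print(insurer_rto)   # 120.0
```

With these made-up inputs, the retailer lands at a 10-minute RTO while the insurer can tolerate two hours, which mirrors the contrast described above.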

Assigning RTOs

It’s one thing to have an RTO of 10 minutes — but it’s another thing to actually meet it.

Unfortunately, calculating and setting an RTO isn’t quite as simple as saying, “This system should be fully recovered within 15 minutes of an outage.” Not only is that easier said than done, but certain events might make meeting RTOs extremely difficult or impossible. Your IT architecture plays a big role, from storage architecture to applications. But one thing is certain: being prepared helps, whether through an on-prem or cloud DR solution.

Fires and natural disasters are extreme examples of such events, of course, but they raise an important point. Rather than firm requirements, your RTOs are better used as general guidelines for deciding how many resources to allocate to certain systems in an emergency.

But which systems deserve the most resources? While we’ve already covered system tiers and their respective RTOs, it may not always be clear which system is in which tier. While Tier 1 systems tend to be pretty obvious, what makes a Tier 2 or Tier 3 system is often subjective.

While there isn’t one answer, the following strategy can help match your RTOs to the right systems.

1. Take a complete inventory of your systems. What does your IT infrastructure look like? Are you using an on-premises system, a cloud-based solution, or both? How many systems do you have, and who supports them? These are just a few of the questions you should ask to not only clarify your topology but also to understand (a) what’s at risk and (b) the unique specifications and recovery requirements of each system.

2. Assess the priority of each system. Even if you’ve already assigned system priorities or tiers, they’re still worth regular review — especially as things change. You may also want to prioritize systems and applications that are necessary to the recovery process, such as any file synchronization or backup tools you may use. Generally, you should prioritize systems that will have the greatest impact on recovery time.

3. Understand potential risks to your systems. Understanding the inherent risks to your systems and applications is crucial for mitigating them. For example, on-premises systems run the risk of downtime and data loss in the event of a power failure. In such a case, meeting your RTOs might mean having a backup power supply.

4. Review recovery solutions and capabilities. What tools will you use in the event of an outage? Who is responsible for what? Does each system have different recovery tools or personnel? In any case, you should know what tools and resources are at your disposal in an emergency — as well as their limitations.

5. Assign RTOs and resources carefully. With a complete view of your infrastructure from a disaster recovery perspective, you can finally assign your RTOs based on system priority and available resources. Though Tier 1 systems should almost always get immediate attention, don’t neglect Tier 2 systems that might be necessary for recovery efforts.
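The steps above can be sketched as a small inventory that is sorted into a recovery order. This is a minimal sketch, not a prescribed method: the `System` class, its fields, and the example entries are all hypothetical, and putting recovery-enabling systems first is one possible reading of steps 2 and 5.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class System:
    name: str
    tier: int                          # 1 = most critical
    rto: timedelta                     # assigned recovery time objective
    needed_for_recovery: bool = False  # e.g. backup or file sync tooling


def recovery_order(systems: list[System]) -> list[System]:
    """Recovery-enabling systems first, then by tier, then by tightest RTO."""
    return sorted(
        systems,
        key=lambda s: (not s.needed_for_recovery, s.tier, s.rto),
    )


# Hypothetical inventory, for illustration only.
inventory = [
    System("intranet wiki", tier=3, rto=timedelta(hours=24)),
    System("backup server", tier=2, rto=timedelta(hours=1), needed_for_recovery=True),
    System("checkout API", tier=1, rto=timedelta(minutes=10)),
    System("reporting", tier=2, rto=timedelta(hours=3)),
]

for system in recovery_order(inventory):
    print(system.name)
```

Here the Tier 2 backup server is restored ahead of the Tier 1 checkout API because other recoveries depend on it. If that trade-off does not fit your environment, drop the first element of the sort key.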

Cost of meeting RTOs

Quick recovery times can come at a cost.

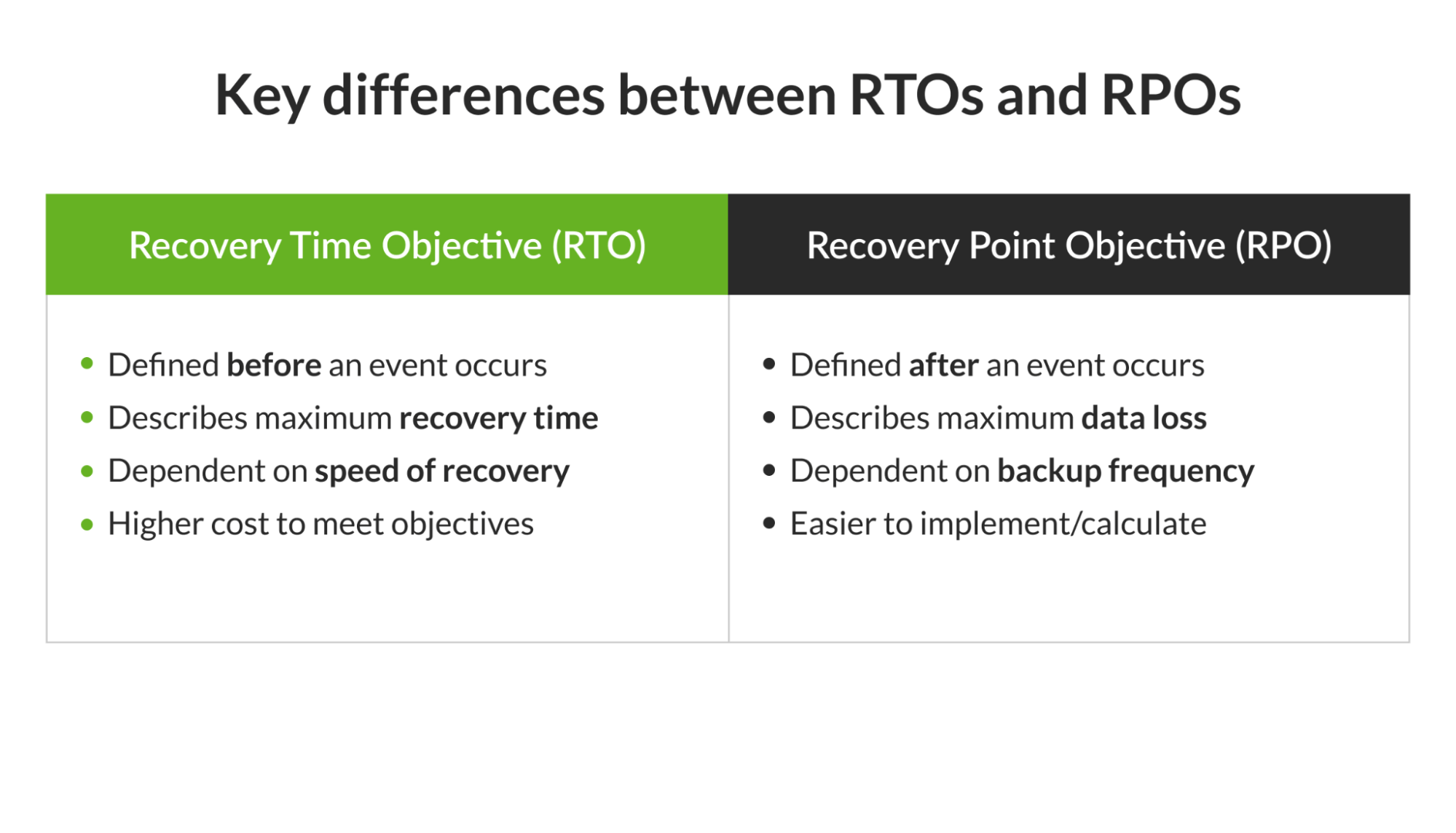

Generally, the more stringent the recovery objective, the costlier it is to meet. This is true for both RTOs and RPOs — whereas meeting RPOs requires budgeting for frequent backups, meeting RTOs requires budgeting for anything that may help minimize downtime. That could mean maintaining redundant servers or using file synchronization tools.

As you might imagine, meeting RTOs is generally much costlier than meeting RPOs, as RPOs usually just require maintaining frequent backups. By contrast, RTOs often involve enterprise-wide efforts involving a combination of tools, infrastructure, and personnel — all of which must be available at a moment’s notice.

Without the right recovery tools in place, even the most well-intentioned RTOs can end up spanning several days.

One Resilio customer was able to solve this exact problem with Resilio Connect. By replacing their slow DFS replication with Resilio’s file synchronization tools, the company was able to reduce their three-to-four-day recovery time to mere minutes.

What is an RPO?

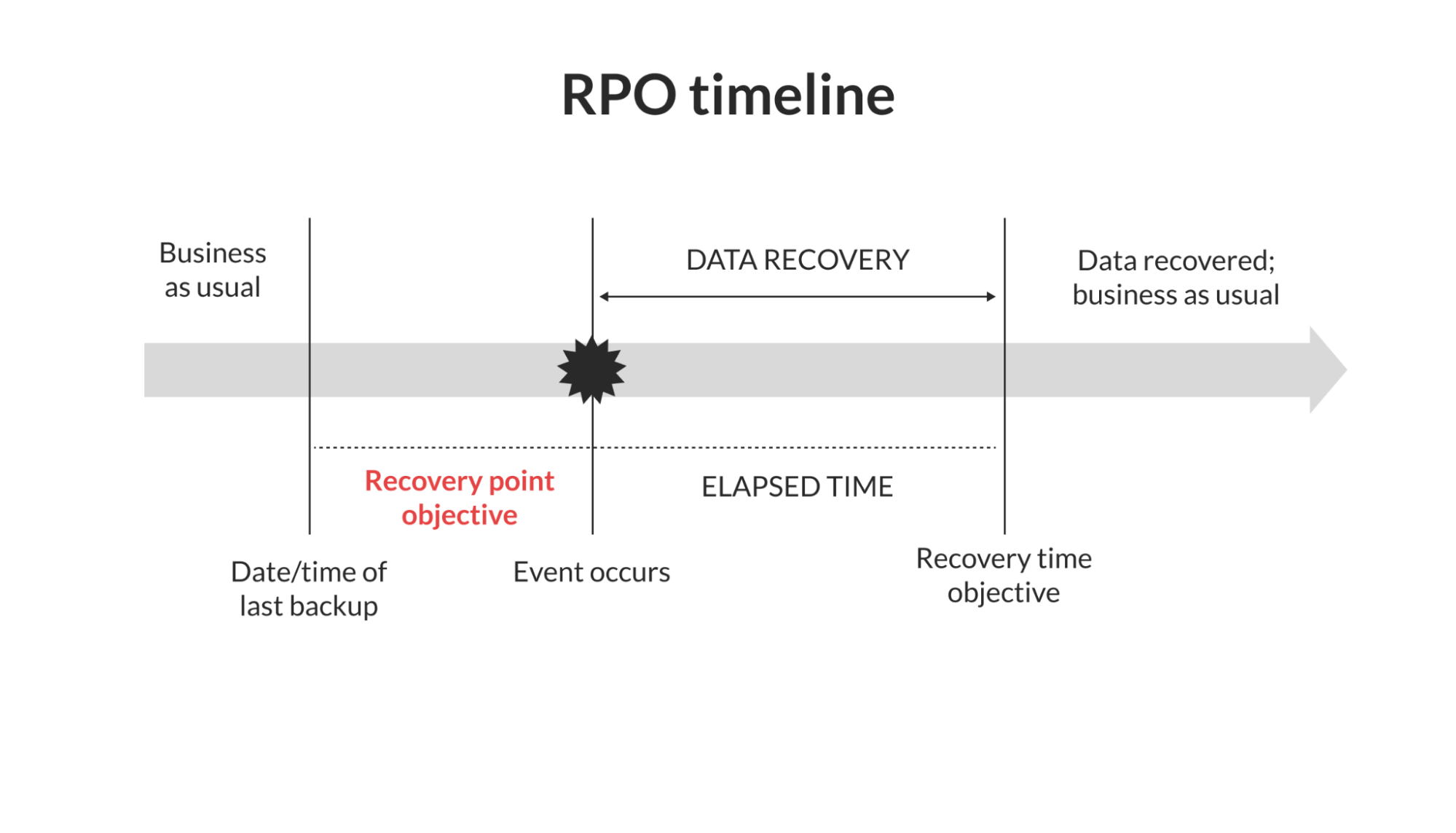

A Recovery Point Objective (RPO) is the maximum amount of data loss an organization can tolerate after an event.

Though data loss and recovery time are often interdependent, an RPO focuses on data loss alone. Even if a slow recovery time results in additional data loss, all we’re worried about here is how much data was lost.

Of course, that doesn’t mean recovery time and RTOs don’t play a pivotal role in meeting RPOs. But before we get into that, let’s explore what it means to use data loss as a benchmark.

Data loss as a benchmark

When a system or application experiences an outage or suffers from data loss, the organization’s RPO sets a benchmark for how much data it can stand to lose — and how much it can recover.

Like RTOs, RPOs use the cost of data loss as a major factor. However, since data impacts everything from personal information to regulatory compliance, the factors for determining RPOs are slightly more varied.

Here are a few major factors that might impact your RPOs.

- Cost of data loss and recovery

- Maximum tolerable data loss

- Storage options

- Industry-specific data factors (e.g., processing sensitive financial data)

- Regulatory compliance (especially as it impacts recovery processes/requirements)

The most important of these factors are the cost of data loss and the maximum tolerable data loss. Of course, these two factors are closely linked, as increasing data loss can come with increased costs.

Your storage options will also have a significant effect on your RPOs. For example, an on-premises server with hourly backups will have a significantly larger RPO than a cloud solution with backups every minute. In any case, the capabilities (namely backup frequency) of your storage solutions will place a fairly strict limit on your RPOs.

Depending on your industry, your RPOs may also be subject to industry-specific regulatory requirements. For example, regulations and standards such as the General Data Protection Regulation (GDPR) and ISO 27001 usually require some form of disaster recovery planning (read: RPOs) and reliable data access. Inadequate RPOs can cause organizations to fall out of compliance in these areas.

Calculating RPOs

So now that we know what affects RPOs, what do RPOs actually look like?

Just like RTOs, RPOs vary depending on system priorities and, more accurately, the importance of the data itself. However, since we’re dealing in terms of data loss rather than time to recovery, calculating RPOs comes down to two major points:

- The amount of data lost

- The systems with the most critical data

Unlike RTOs, however, RPOs can’t be grouped into generalized tiers (10 minutes for Tier 1, 1 hour for Tier 2, etc.). Much of this is because the maximum tolerable data loss varies widely between organizations.

For example, a small accounting firm working with an Excel spreadsheet could have an RPO on the order of kilobytes, because most of the data lost would be spreadsheet entries made since the last backup. By contrast, the RPO of a game development company could easily be on the order of gigabytes (or even terabytes) due to the high volume of media-rich files they work with.

When it comes to calculating your own RPOs, you should consider the average amount of data you process or add between backups. As you can probably imagine, frequent backups are the key to a small RPO — after all, you probably won’t lose much data in an outage if your backups are only minutes apart.
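As a rough worked example, the data at risk in an outage can be estimated as the rate of change multiplied by the time between backups. The function name and every figure below are hypothetical, and the sketch assumes data changes at a steady rate, which is rarely true of real workloads.

```python
def data_at_risk_mb(change_rate_mb_per_hour: float,
                    backup_interval_minutes: float) -> float:
    """Worst-case data changed since the last backup, in megabytes."""
    return change_rate_mb_per_hour * backup_interval_minutes / 60


# Hypothetical workloads, for illustration only.
accounting_firm = data_at_risk_mb(0.5, backup_interval_minutes=60)
game_studio_hourly = data_at_risk_mb(12_000, backup_interval_minutes=60)
game_studio_5_min = data_at_risk_mb(12_000, backup_interval_minutes=5)

print(accounting_firm)      # 0.5
print(game_studio_hourly)   # 12000.0
print(game_studio_5_min)    # 1000.0
```

Moving the hypothetical studio from hourly to five-minute backups cuts the worst-case loss from roughly 12 GB to 1 GB, which is the effect of backup frequency described above.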

Assigning RPOs

Assigning RPOs is relatively simple: If you know how much data gets changed or added between backups, then you probably have a good idea of your RPOs.

However, it’s not always so simple. Most organizations deal with a wide range of diverse systems and storage solutions, each of which may have its own backup frequency. As a result, each system usually has its own inherent RPO, making assigning RPOs more a matter of system analysis than strategic planning.

In any case, the following procedure can help you determine the right RPOs for different systems in your infrastructure:

1. Segment your infrastructure by types of data storage. Whether you have a single on-premises server or a vast hybrid model of physical and cloud servers, it’s useful to gather a top-down view of how many different ways you store (and, more specifically, back up) your data. These segments will help inform how you allocate RPOs later on.

2. List the backup frequency of each storage system. Every discrete storage system will have its own backup capabilities, and these can differ even between two systems of the same type (e.g., two cloud-based servers). Here, your RPOs will likely be determined by the systems with the lowest backup frequency. For example, even if you have a few lightning-fast cloud servers, your RPOs could be limited if your most critical data is in a slower, on-premises server with less frequent backups.

3. Calculate the volume of data changed between backups. The previous step determines the time between backups. This step determines how much data changes between backups. This is a crucial distinction, as backup frequency matters little to your RPOs if very little data is being processed. By that measure, your most crucial systems will be those in which a high volume of data is processed between backups.

4. Consider the ease of reverting to backups. Even if you have frequent backups, how easy is it to revert to the preceding backup in an emergency? While systems with frequent backups usually have equally quick rollback capabilities, it’s an important factor to consider for systems where restoring a backup could take an extended time period (and even impact RTOs).

5. Identify opportunities for increasing backup frequency. No matter how much or how little data your systems process, increasing backup frequency will always be key to a small RPO. As you walk through your systems and their backup capabilities, try to identify opportunities for implementing file synchronization and other tools for narrowing the gap between backups.
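Steps 1 to 3 and step 5 of this procedure can be sketched in a few lines. Everything here is an assumption made for illustration: the `StorageSystem` class, its fields, and the example systems are hypothetical, and the sketch leaves out step 4 (ease of reverting), which needs restore testing rather than arithmetic.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class StorageSystem:
    name: str
    backup_interval: timedelta
    change_rate_gb_per_hour: float
    holds_critical_data: bool

    @property
    def exposure_gb(self) -> float:
        """Data changed between two consecutive backups, in gigabytes."""
        hours = self.backup_interval / timedelta(hours=1)
        return self.change_rate_gb_per_hour * hours


def limiting_system(systems):
    """Critical system with the longest gap between backups (step 2).

    Raises ValueError if no system holds critical data.
    """
    critical = [s for s in systems if s.holds_critical_data]
    return max(critical, key=lambda s: s.backup_interval)


def rank_by_exposure(systems):
    """Systems ordered by data at risk, largest first (steps 3 and 5)."""
    return sorted(systems, key=lambda s: s.exposure_gb, reverse=True)


# Hypothetical segments of an infrastructure (step 1).
systems = [
    StorageSystem("cloud-a", timedelta(minutes=1), 6.0, True),
    StorageSystem("on-prem file server", timedelta(hours=1), 2.0, True),
    StorageSystem("archive", timedelta(hours=24), 0.1, False),
]

print(limiting_system(systems).name)
print([s.name for s in rank_by_exposure(systems)])
```

In this made-up inventory, the on-premises file server limits the RPO for critical data, while the archive has the largest raw exposure simply because it is backed up once a day.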

Cost of meeting RPOs

Meeting RPOs is generally much less expensive than meeting RTOs.

Whereas meeting RTOs requires cooperation across an entire organization, meeting RPOs usually comes down to increasing backup frequency and reliability in individual systems. As a result, the cost of meeting RPOs is tied to the cost of the systems themselves.

For example, if you have an on-premises server or an otherwise slow distributed file system (DFS), then meeting your RPOs may require paying for upgraded file synchronization or migrating to a different infrastructure altogether. However, that doesn’t mean the cost of doing so will be purely to meet your RPOs — instead, meeting RPOs is a natural result of investing in more efficient, reliable systems.

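To sum up, here are the key differences between RTOs and RPOs:

- Focus: RTOs cover how quickly you recover, while RPOs cover how much data you can afford to lose.

- Main drivers: RTOs follow the cost of downtime and system priority, while RPOs follow the cost of data loss and backup frequency.

- Typical targets: RTOs are commonly grouped by tier, while RPOs vary too widely between organizations to generalize.

- Cost to meet: RTOs are generally costlier, as they involve tools, infrastructure, and personnel, while RPOs mostly come down to frequent, reliable backups.

Now let’s look at how you can meet RTOs and RPOs in the next section.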

5 tips for meeting RTOs and RPOs

Though meeting RTOs and RPOs are two different things, they share several common strategies.

In many cases, backup capabilities and time to recovery are closely linked. If you have frequent, readily available backups, then both your data loss (RPO) and recovery time (RTO) will be relatively small.

Consider the following tips in your disaster recovery strategy to meet both RTOs and RPOs:

1. Fine-tune your backup parameters

Your system’s backup parameters are arguably the most important factor in meeting your RPOs — and, in some cases, your RTOs as well.

Though the exact backup parameters you choose will vary depending on your data, industry, and requirements, it’s best practice to have at a minimum:

- Multiple versions of your data. A reliable backup solution will maintain multiple versions of your data for a certain period of time. Beyond being able to revert to the most recent version in the event of a disaster, it’s also useful (and often necessary) for auditing, compliance, and more significant rollbacks.

- More snapshots for more critical data. Let’s face it: Some data is more critical than others. Less-than-critical data probably doesn’t need a new snapshot every five minutes. Instead, save your snapshots and storage for only the most critical/valuable data.

- A 90-day retention plan. Though you don’t need to retain previous data for exactly 90 days, it’s a good benchmark that will make most systems reliable and compliant with regulations.

- Real-time synchronization. Use a real-time sync solution to replicate file changes across sites immediately.
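A retention policy along these lines might be sketched as follows. The function name and the `min_versions` default are hypothetical, and 90 days is used only because it is the benchmark mentioned above, not because any regulation requires that exact figure.

```python
from datetime import datetime, timedelta


def snapshots_to_keep(snapshots, now,
                      retention=timedelta(days=90), min_versions=3):
    """Return the snapshot timestamps to retain, newest first.

    Keeps everything inside the retention window, and never fewer than
    min_versions snapshots even if they are all older than the window.
    """
    newest_first = sorted(snapshots, reverse=True)
    keep = [s for s in newest_first if now - s <= retention]
    if len(keep) < min_versions:
        keep = newest_first[:min_versions]
    return keep


# Hypothetical snapshot history, for illustration only.
now = datetime(2024, 6, 30)
history = [
    datetime(2023, 12, 1),
    datetime(2024, 1, 1),
    datetime(2024, 5, 1),
    datetime(2024, 6, 29),
]

kept = snapshots_to_keep(history, now)
print([s.date().isoformat() for s in kept])
# ['2024-06-29', '2024-05-01', '2024-01-01']
```

Only two snapshots fall inside the 90-day window here, so the sketch keeps the January snapshot as well to preserve three versions.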

2. Optimize your recovery processes

You may have a nice reserve of data backups and even some recovery policies and procedures. That’s a great start, but it may not be enough.

Your recovery processes are only as good as your (and your organization’s) familiarity with them in a disaster. In other words, if your recovery processes aren’t clear or fine-tuned, then all of your good planning is useless — and your RTOs and RPOs will be long gone.

To avoid scrambling at the last minute, regularly review and practice your recovery processes with everyone involved. And don’t set your recovery processes in stone, either: look for feedback from those involved and try to identify room for improvement or areas where new tools can improve recovery time.
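When you practice a recovery, it helps to record a few timestamps and compare the result against your objectives. The sketch below assumes a time-based RPO (the gap between the last good backup and the outage); the function name, field names, and timestamps are all hypothetical.

```python
from datetime import datetime, timedelta


def evaluate_drill(outage_start, service_restored, last_backup, rto, rpo):
    """Compare one recovery drill against its RTO and RPO."""
    recovery_time = service_restored - outage_start
    data_loss_window = outage_start - last_backup
    return {
        "recovery_time": recovery_time,
        "data_loss_window": data_loss_window,
        "met_rto": recovery_time <= rto,
        "met_rpo": data_loss_window <= rpo,
    }


# Hypothetical drill, for illustration only.
result = evaluate_drill(
    outage_start=datetime(2024, 3, 1, 10, 0),
    service_restored=datetime(2024, 3, 1, 10, 12),
    last_backup=datetime(2024, 3, 1, 9, 50),
    rto=timedelta(minutes=15),
    rpo=timedelta(minutes=5),
)

print(result["met_rto"], result["met_rpo"])  # True False
```

In this example the team recovered within the 15-minute RTO, but the last backup was 10 minutes old, so a 5-minute RPO was missed. That kind of result points to backup frequency rather than recovery procedure as the area to improve.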

3. Budget storage and snapshots wisely

In a perfect world, we could simply maintain backups and snapshots in real time so that we could instantly recover from any disaster.

Unfortunately, this is more or less impossible short of some very high-end cloud solutions. As a result, you’ll need to budget your storage and snapshots wisely, as these will both come at a cost to data processing, storage, and so on.

Besides giving more resources to more critical data, it may also help to keep older backups in local storage to minimize load on live systems. This practice is becoming especially economical as large-capacity hard drives become more affordable.
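To see why snapshot budgets matter, it can help to count how many snapshots a given schedule produces. This is a back-of-the-envelope sketch: the function names and the average snapshot size are hypothetical, and it ignores deduplication, compression, and full backups.

```python
from datetime import timedelta


def snapshot_count(interval: timedelta, retention: timedelta) -> int:
    """Number of snapshots retained at a fixed interval."""
    return retention // interval


def snapshot_storage_gb(interval, retention, avg_snapshot_gb):
    """Rough storage needed, assuming every snapshot has the same size."""
    return snapshot_count(interval, retention) * avg_snapshot_gb


retention = timedelta(days=90)

print(snapshot_count(timedelta(minutes=5), retention))  # 25920
print(snapshot_count(timedelta(hours=1), retention))    # 2160
print(snapshot_storage_gb(timedelta(hours=1), retention, avg_snapshot_gb=0.5))  # 1080.0
```

A five-minute schedule retains twelve times as many snapshots as an hourly one over the same period, which is why it makes sense to reserve the tightest schedules for the most critical data.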

4. Maintain a 3-2-1 backup strategy

If there’s one concrete rule of backups, it’s that you should always maintain a 3-2-1 backup strategy. That means keeping at least:

- 3 copies of your data

- 2 different types of storage media

- 1 copy stored off-site

Note the “at least” phrasing here — the 3-2-1 rule is the bare minimum for reliable backups.

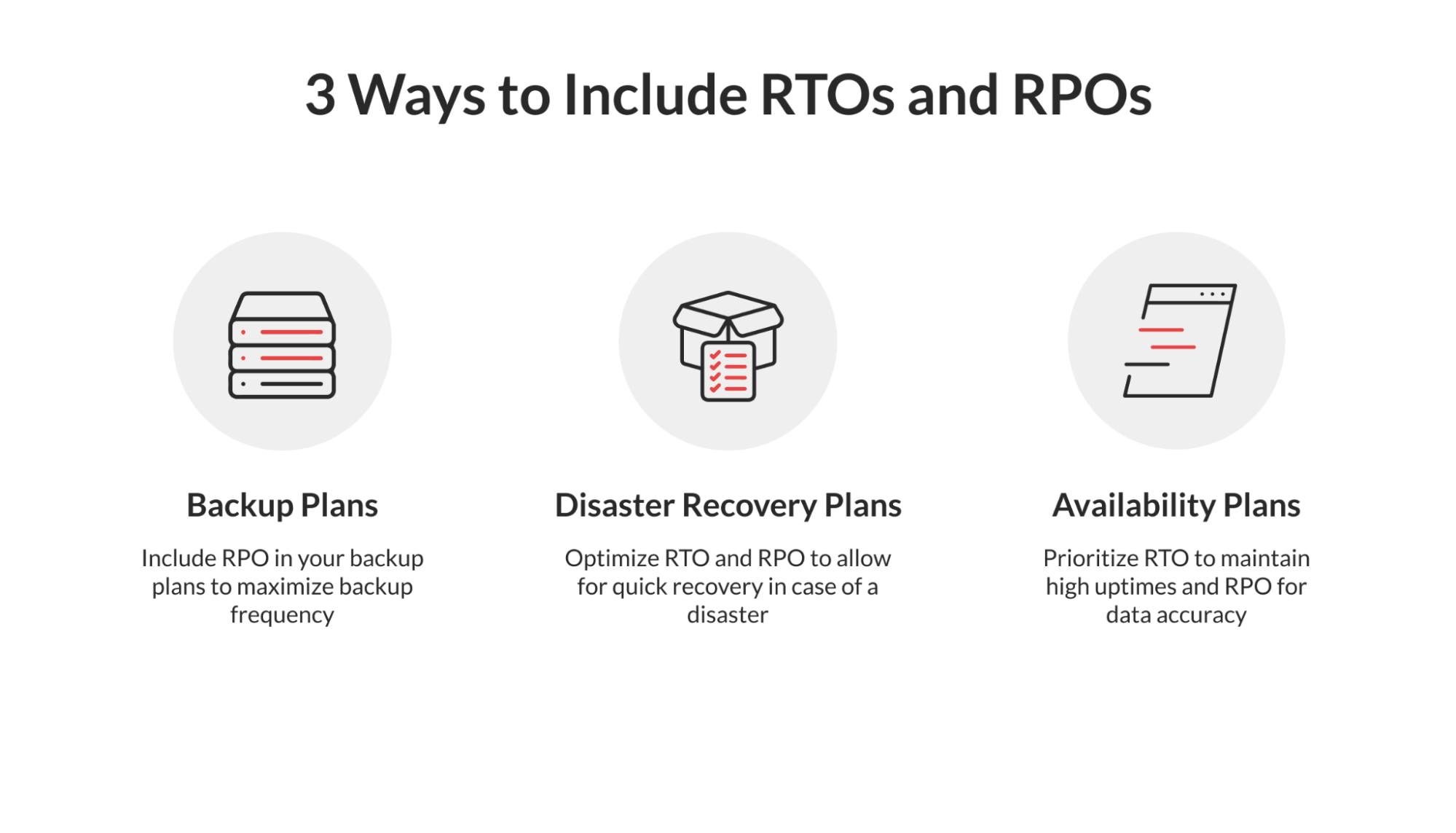
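A 3-2-1 check is simple enough to automate. In the sketch below, the `BackupCopy` class and the example copies are hypothetical, and the middle check uses the common reading of the rule, namely at least two different types of storage media.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    location: str
    media: str      # e.g. "disk", "tape", "object storage"
    offsite: bool


def meets_3_2_1(copies) -> bool:
    """True if there are 3+ copies, on 2+ media types, with 1+ off-site."""
    return (
        len(copies) >= 3
        and len({c.media for c in copies}) >= 2
        and any(c.offsite for c in copies)
    )


# Hypothetical backup sets, for illustration only.
compliant = [
    BackupCopy("primary server", "disk", offsite=False),
    BackupCopy("local NAS", "disk", offsite=False),
    BackupCopy("cloud bucket", "object storage", offsite=True),
]
all_local = [
    BackupCopy("primary server", "disk", offsite=False),
    BackupCopy("local NAS", "disk", offsite=False),
    BackupCopy("tape library", "tape", offsite=False),
]

print(meets_3_2_1(compliant))  # True
print(meets_3_2_1(all_local))  # False
```

The second set has three copies on two media types but nothing off-site, so a single site-wide event could still destroy all of them.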

5. Add RTOs/RPOs to your disaster recovery and business continuity plan

It may seem obvious, but the practice of adding RTOs and RPOs can make a big difference in your recovery planning.

Beyond setting expectations, the process of calculating and assigning recovery objectives can give you a better idea of your recovery capabilities — information that can better inform your recovery procedures and help you identify areas for improvement.

But which objectives go where? While RTOs and RPOs are key to most recovery and continuity plans, they’re especially effective in the following areas.

- Backup plans: Include RPOs in your backup plans

- Data loss prevention: Optimize RTOs and RPOs to allow for quick recovery

- Business continuity/availability: Prioritize RTOs to maintain uptime

Note that simply adding RTOs and RPOs to your plans is only the start. As we mentioned earlier, it’s crucial to regularly verify and update these plans to help you stay prepared for new risks.

Protect Files and Recover Faster with Resilio Connect

From getting your systems back online to restoring the most recent backup, speed and efficiency are the keys to meeting your RTOs and RPOs.

Resilio’s low-latency, real-time file replication solution for DR protects files of any type and size across sites to meet sub-five-second RPOs and RTOs within minutes of an outage. Resilio’s highly resilient, peer-to-peer (P2P) architecture eliminates single points of failure to protect files in production environments in the event of an outage. Organizations may use any type of server, storage, on-prem data center, or cloud.

Ready to meet — or even beat — your RTOs? Contact Resilio today to learn more and schedule a free demo.